Keep the Stack Current, Keep Agents in Bounds

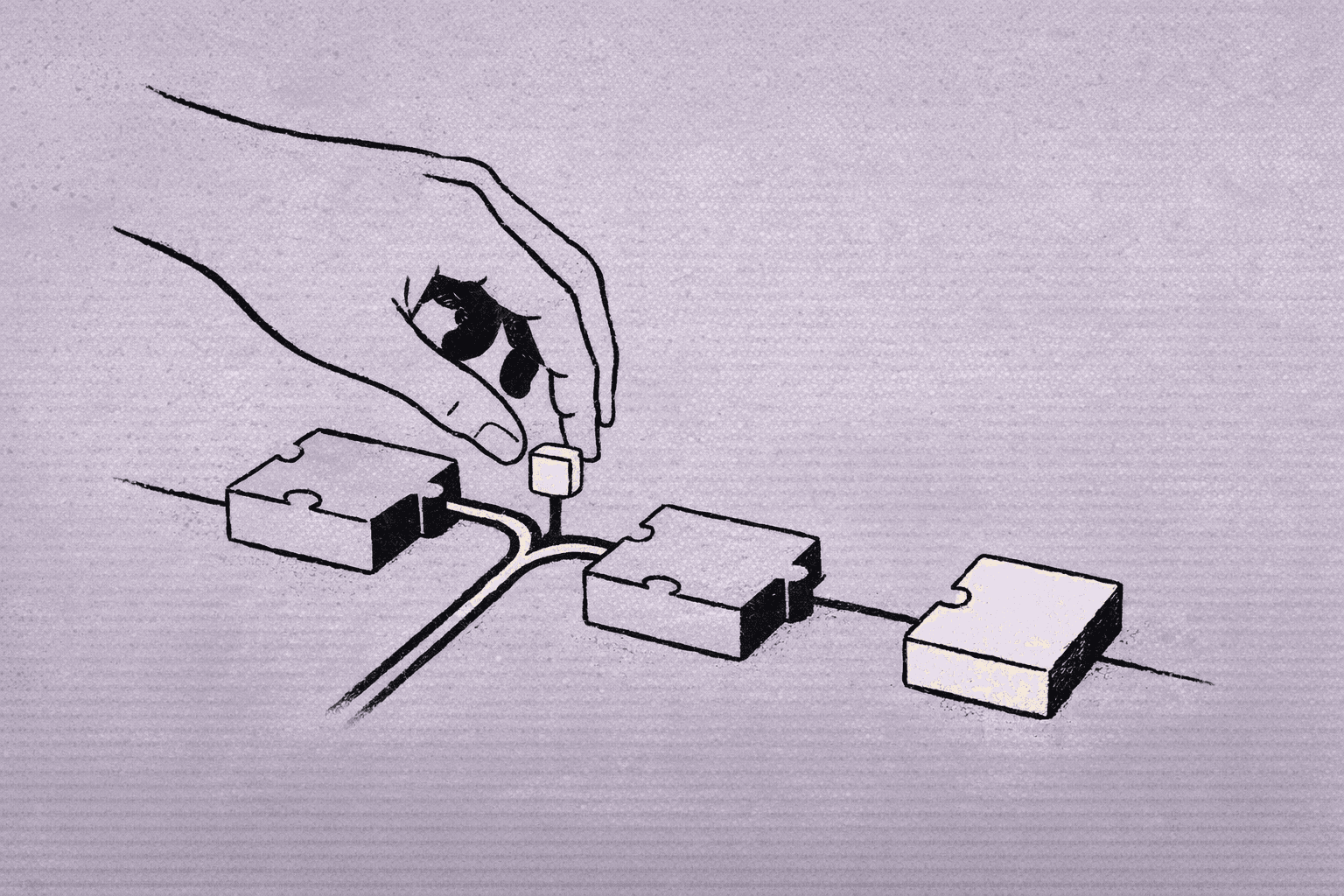

A practical look at refreshed dependencies and short rules that make AI coding easier to review.

A recent starter stack update is a useful reminder: many of the practical gains in AI-assisted coding come from routine maintenance, not from adding another agent. The update bundled newer framework and UI dependencies with explicit rules for AI-assisted coding in common agent IDEs and CLIs. That combination matters more than the specific tools involved.

If the base stack is stale, agents spend time working around drift. If instructions are vague, they improvise in ways that are hard to review. If dependency ranges are loose, a reinstall or lockfile refresh can pull in new versions, so a small change can arrive with a large diff. A refreshed stack cuts that friction before prompting starts.
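A small check over `package.json` can show how much of a manifest can float. The sketch below is a hypothetical helper, not part of the starter stack. It assumes a `package.json`-shaped object and treats anything other than an exact `major.minor.patch` version as a range that can resolve to something new. The package versions in the example are made up.

```typescript
// Hypothetical helper (not part of any starter stack): list dependency specs
// that are not exact versions and can therefore resolve to something new.
type Manifest = {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
};

// Exact pins look like "1.2.3" or "1.2.3-beta.1"; "^", "~", "*", ranges, and tags can float.
const EXACT_VERSION = /^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?$/;

function findLoosePins(manifest: Manifest): string[] {
  const all = { ...manifest.dependencies, ...manifest.devDependencies };
  return Object.entries(all)
    .filter(([, spec]) => !EXACT_VERSION.test(spec))
    .map(([name, spec]) => `${name}@${spec}`)
    .sort();
}

// Example manifest with made-up versions.
const example: Manifest = {
  dependencies: { next: "^15.0.0", react: "19.0.0" },
  devDependencies: { tailwindcss: "~4.0.0", typescript: "5.6.3" },
};

console.log(findLoosePins(example)); // flags next@^15.0.0 and tailwindcss@~4.0.0
```

A lockfile still controls what actually installs. A check like this matters most when lockfiles get regenerated, which is when surprise upgrades tend to show up. It will also flag `workspace:`, `file:`, and git specs, which you may want to allow explicitly.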

What changed in practice

The update points to a broad cleanup: modernized Next.js, React, Tailwind, and component library setup, along with rules for AI coding tools. The exact package versions matter less than the shape of the change. It is a maintenance pass aimed at making the project easier for humans and agents to work in.

That is the right frame for teams. Treat the stack as the surface agents work on, not just the app runtime. When that surface is clean, the agent has fewer reasons to wander outside the task.

Why rules matter more than prompts

In agentic coding, the difference between a useful run and a messy one often comes down to instruction quality. A prompt covers one task. Rules persist across tasks and give the agent stable constraints: where to look, what to avoid, how to format output, and when to stop.

That helps most in codebases with multiple entry points. A rule set can tell an agent to:

- prefer existing patterns over new abstractions

- keep changes small and local

- update tests when behavior changes

- avoid unrelated files

- ask before structural edits
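Some of these rules can also be checked mechanically before a human looks at the diff. The sketch below is a hypothetical pre-review check. It assumes you can list the files an agent touched, with added and removed line counts and whether each file was added, modified, or deleted (for example from `git diff --numstat` and `git diff --name-status`). Three rules map directly: out-of-scope paths, oversized diffs, and new or deleted files flagged for sign-off. "Update tests when behavior changes" is approximated crudely as "a code change should come with a test-file change". "Prefer existing patterns" is left to human review.

```typescript
// Hypothetical pre-review check; the file list would come from something like
// `git diff --numstat` plus `--name-status`, which this sketch does not run.
type FileChange = {
  path: string;
  added: number;
  removed: number;
  kind: "modified" | "added" | "deleted";
};

type ScopeRules = {
  allowedPrefixes: string[]; // "avoid unrelated files"
  maxChangedLines: number; // "keep changes small and local"
};

const CODE_FILE = /\.[jt]sx?$/;
const TEST_FILE = /\.(test|spec)\.[jt]sx?$/;

function checkChange(changes: FileChange[], rules: ScopeRules): string[] {
  const problems: string[] = [];
  for (const c of changes) {
    if (!rules.allowedPrefixes.some((prefix) => c.path.startsWith(prefix))) {
      problems.push(`out of scope: ${c.path}`);
    }
    // "ask before structural edits": new or deleted files need explicit sign-off.
    if (c.kind !== "modified") {
      problems.push(`structural edit needs sign-off: ${c.kind} ${c.path}`);
    }
  }
  const total = changes.reduce((sum, c) => sum + c.added + c.removed, 0);
  if (total > rules.maxChangedLines) {
    problems.push(`diff too large: ${total} lines > ${rules.maxChangedLines}`);
  }
  // Crude proxy for "update tests when behavior changes".
  const touchedCode = changes.some((c) => CODE_FILE.test(c.path) && !TEST_FILE.test(c.path));
  const touchedTests = changes.some((c) => TEST_FILE.test(c.path));
  if (touchedCode && !touchedTests) {
    problems.push("code changed without a test change");
  }
  return problems;
}

const proposed: FileChange[] = [
  { path: "src/components/Button.tsx", added: 12, removed: 4, kind: "modified" },
  { path: "src/lib/format.ts", added: 30, removed: 0, kind: "added" },
];

// Reports the new file and the missing test change.
console.log(checkChange(proposed, { allowedPrefixes: ["src/components/", "src/lib/"], maxChangedLines: 200 }));
```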

Those instructions are plain, and that is the point. They keep review time down.

A practical workflow for teams

If you want this pattern to hold across tools, build it as a workflow rather than a one-off prompt.

Start with a dependency audit. Identify packages that affect build behavior, styling, routing, and test execution. Update them together only when you can verify the app still boots and the main paths still pass. Mixed-version stacks are where agents lose time.
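For the audit step, a useful first check is whether packages meant to move together are actually on the same version. The groups below are illustrative assumptions (`react` with `react-dom`, `next` with `eslint-config-next`); substitute whatever your stack couples. The comparison is deliberately simple: it strips a leading `^` or `~` and compares the remaining strings.

```typescript
// Hypothetical audit: packages in the same group are expected to share a version.
type Deps = Record<string, string>;

// Illustrative groups only; adjust to whatever your stack actually couples.
const LOCKSTEP_GROUPS: string[][] = [
  ["react", "react-dom"],
  ["next", "eslint-config-next"],
];

// Strip a leading ^ or ~ so "^19.0.0" and "19.0.0" compare as equal.
function normalize(spec: string): string {
  return spec.replace(/^[\^~]/, "");
}

function findMixedVersions(deps: Deps, groups: string[][] = LOCKSTEP_GROUPS): string[] {
  const issues: string[] = [];
  for (const group of groups) {
    const present = group.filter((name) => name in deps);
    const versions = new Set(present.map((name) => normalize(deps[name])));
    if (versions.size > 1) {
      issues.push(present.map((name) => `${name}@${deps[name]}`).join(" vs "));
    }
  }
  return issues;
}

const audit = findMixedVersions({
  react: "^19.0.0",
  "react-dom": "^18.3.1",
  next: "15.0.0",
  "eslint-config-next": "15.0.0",
});
console.log(audit); // reports the react / react-dom mismatch only
```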

Then write tool-agnostic rules. Keep them short and concrete. Focus on file scope, test expectations, and change size. Avoid policy language. Agents do better with operational constraints than with philosophy.
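Tool-agnostic rules are easier to keep short if they live as data and get rendered into whatever file each tool reads. This is a minimal sketch under that assumption. The rule texts, the three area names, and the 200-line threshold are hypothetical examples. The output is plain markdown, not any specific tool's rule format.

```typescript
// Hypothetical single source for agent rules; the rule texts and the 200-line
// threshold are examples, not recommendations from any tool vendor.
type Rule = { area: "scope" | "tests" | "size"; text: string };

const RULES: Rule[] = [
  { area: "scope", text: "Only edit files under the directory named in the task." },
  { area: "scope", text: "Ask before adding, deleting, or moving files." },
  { area: "tests", text: "If behavior changes, update or add a test in the same change." },
  { area: "size", text: "Keep each change under about 200 changed lines; split larger work." },
];

// Render plain markdown grouped by area, skipping areas that have no rules.
function renderRules(rules: Rule[]): string {
  const areas: Rule["area"][] = ["scope", "tests", "size"];
  const sections = areas
    .map((area) => {
      const lines = rules.filter((r) => r.area === area).map((r) => `- ${r.text}`);
      return lines.length > 0 ? `## ${area}\n${lines.join("\n")}` : "";
    })
    .filter((section) => section.length > 0);
  return `# Agent rules\n\n${sections.join("\n\n")}\n`;
}

console.log(renderRules(RULES));
```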

Next, define a review loop. A good loop is: agent proposes, human checks, tests run, then the change lands. If the agent chains too many edits before review, the diff gets harder to reason about.
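The review loop can be modeled as ordered gates plus a cap on how many edits the agent may stack before a human looks. The sketch below is a hypothetical illustration of that ordering, not a feature of any agent tool. Stages cannot be skipped, and a failed review or test run sends the change back to `proposed` with a fresh edit budget.

```typescript
// Hypothetical model of the loop (not a feature of any agent tool):
// proposed -> reviewed -> tested -> landed, with no skipping.
type Stage = "proposed" | "reviewed" | "tested" | "landed";
const ORDER: Stage[] = ["proposed", "reviewed", "tested", "landed"];

class ReviewLoop {
  private stage: Stage = "proposed";
  private unreviewedEdits = 0;
  private readonly maxUnreviewedEdits: number;

  constructor(maxUnreviewedEdits: number) {
    this.maxUnreviewedEdits = maxUnreviewedEdits;
  }

  // The agent stacks another edit onto the pending change; refused once the cap is reached.
  addEdit(): boolean {
    if (this.stage !== "proposed" || this.unreviewedEdits >= this.maxUnreviewedEdits) {
      return false;
    }
    this.unreviewedEdits += 1;
    return true;
  }

  // Advance exactly one stage. A failed gate returns the change to "proposed"
  // and resets the edit budget so the agent can revise.
  advance(to: Stage, passed: boolean): boolean {
    const next = ORDER[ORDER.indexOf(this.stage) + 1];
    if (to !== next) return false;
    if (!passed) {
      this.stage = "proposed";
      this.unreviewedEdits = 0;
      return false;
    }
    this.stage = to;
    return true;
  }

  get current(): Stage {
    return this.stage;
  }
}

const loop = new ReviewLoop(3);
loop.addEdit();
loop.addEdit();
loop.addEdit();
console.log(loop.addEdit()); // false: a fourth unreviewed edit is refused
console.log(loop.advance("tested", true)); // false: human review cannot be skipped
loop.advance("reviewed", true);
loop.advance("tested", true);
loop.advance("landed", true);
console.log(loop.current); // landed
```

The cap is what keeps the diff reviewable. Without it, the agent can chain edits until the change is too large to reason about in one pass.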

Finally, keep one place for project conventions. That can be a short markdown file, a repo rule file, or both. The format matters less than consistency.
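If conventions live in both a markdown file and a repo rule file, one simple way to keep them consistent is to treat one as canonical and fail a check when a copy diverges. This hypothetical sketch compares contents passed in as strings and leaves reading them from disk out. The paths in the example are placeholders, not the file names any particular tool expects.

```typescript
// Hypothetical drift check: every copy of the conventions must match the canonical text.
// Reading the files from disk is left out; contents are passed in as strings.
function findDriftedCopies(canonical: string, copies: Record<string, string>): string[] {
  // Ignore CRLF vs LF and trailing whitespace at the end of the file, nothing else.
  const normalize = (text: string) => text.replace(/\r\n/g, "\n").trimEnd();
  const expected = normalize(canonical);
  return Object.entries(copies)
    .filter(([, text]) => normalize(text) !== expected)
    .map(([path]) => path)
    .sort();
}

// Placeholder paths, not the file names any particular tool expects.
const canonical = "# Conventions\n- Keep changes small.\n";
const drifted = findDriftedCopies(canonical, {
  "rules/ide.md": "# Conventions\r\n- Keep changes small.",
  "rules/cli.md": "# Conventions\n- Keep changes small.\n- Refactor freely.\n",
});
console.log(drifted); // only rules/cli.md has drifted
```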

Tradeoffs and limits

This approach is not free.

A tighter stack can slow initial setup. Upgrading several dependencies at once can expose unrelated breakage. Rules can also go stale if the codebase changes faster than the instructions do. And if the rules are too strict, agents may stop being useful for exploratory work.

There is also a maintenance cost in keeping the rules readable. Long instruction sets tend to decay into noise. Shorter rules are easier to enforce, but they may miss edge cases. Teams need to decide where they want the burden: in the prompt, in review, or in tests.
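A size budget on the rules file itself is one way to keep that burden visible. The lint below is a hypothetical sketch. It treats each line starting with `- ` as one rule and warns when the rule count or the words per rule exceed a budget. The thresholds in the example are arbitrary, not recommendations from the source.

```typescript
// Hypothetical lint for a rules file; thresholds are supplied by the caller.
type Budget = { maxRules: number; maxWordsPerRule: number };

function lintRules(markdown: string, budget: Budget): string[] {
  // Treat every "- " bullet line as one rule; headings and prose are ignored.
  const rules = markdown
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line.startsWith("- "))
    .map((line) => line.slice(2));
  const warnings: string[] = [];
  if (rules.length > budget.maxRules) {
    warnings.push(`too many rules: ${rules.length} > ${budget.maxRules}`);
  }
  rules.forEach((rule, i) => {
    const words = rule.split(/\s+/).filter(Boolean).length;
    if (words > budget.maxWordsPerRule) {
      warnings.push(`rule ${i + 1} has ${words} words (max ${budget.maxWordsPerRule})`);
    }
  });
  return warnings;
}

const sample = [
  "# Agent rules",
  "- Keep changes small.",
  "- Avoid unrelated files.",
  "- When you touch anything related to routing, also check the layout, the middleware, and every page that imports it.",
].join("\n");

console.log(lintRules(sample, { maxRules: 10, maxWordsPerRule: 12 })); // flags rule 3 as too long
```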

The source material also appears to be a product update, not a controlled study. So the safe conclusion is not that this exact stack is universally better. It is that modern dependencies plus explicit agent rules are a sensible baseline for teams that want fewer surprises.

What to copy across tools

The useful pattern is portable.

Whether you use an IDE agent or a CLI agent, the same three things help:

- a current stack with fewer compatibility gaps

- short rules that describe expected behavior

- a review and test loop that catches drift early

That is enough to improve most coding sessions without changing the model or the interface.

A small methodology note

This kind of update belongs in the Document step. If the rules are not written down clearly, they will not survive the next tool change or team handoff.

Bottom line

The strongest signal here is not the dependency list. It is the operating model. Keep the stack current, keep the rules short, and keep the agent’s scope narrow. That combination is unremarkable, but it is what makes AI coding tools hold up in real work.

Want to learn more about Cursor?

We offer enterprise training and workshops to help your team become more productive with AI-assisted development.
